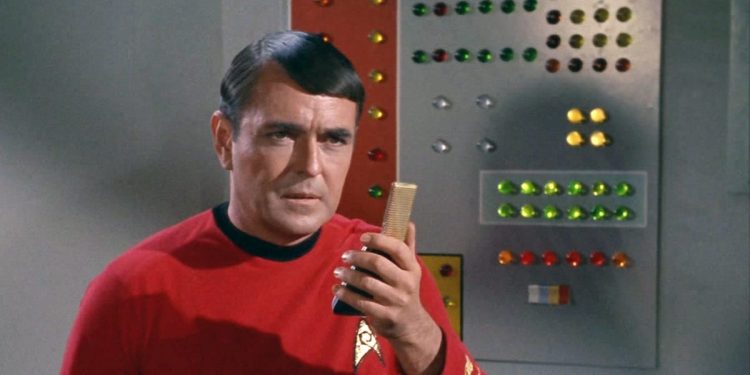

In Star Trek, the Starship Enterprise had a chief engineer, Montgomery “Scotty” Scott, who regularly had to explain to Captain Kirk that certain things were impossible to achieve because of constraints such as the laws of physics.

“The engines cannae take it, Captain!” is a famous quote that the actor may never have actually said on the television show. But you get the idea.

We may be approaching such a moment in the tech industry right now, as the AI agent trend gathers momentum.

The field is starting to shift from relatively simple chatbots to more capable AI agents that can complete complex tasks autonomously. Is there enough computing power to sustain this transformation?

According to a recent Barclays report, the AI industry will have enough capacity to support between 1.5 billion and 22 billion AI agents.

This could be enough to revolutionize white-collar work, but additional computing power may be needed to run these agents while also satisfying consumer demand for chatbots, Barclays analysts explained in a note to investors this week.

It’s all about the tokens

AI agents generate many more tokens per user query than traditional chatbots, which makes them more computationally expensive.

Tokens are the language of generative AI and are at the heart of emerging pricing models in the industry. AI models break down words and other inputs into numerical tokens to make them easier to process and understand. One token is about ¾ of a word.
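As a rough illustration of the ¾ rule of thumb above, the conversion can be sketched in Python. The function names and the fixed ratio are assumptions made for this example; real tokenizers differ by model and by language, so the results are only ballpark figures.

```python
# Rule of thumb from the text: one token is about 3/4 of a word.
# This constant is an assumption; actual ratios depend on the tokenizer.
WORDS_PER_TOKEN = 0.75


def tokens_to_words(tokens: float) -> float:
    """Estimate how many words a given number of tokens represents."""
    return tokens * WORDS_PER_TOKEN


def words_to_tokens(words: float) -> float:
    """Estimate how many tokens a given number of words requires."""
    return words / WORDS_PER_TOKEN


print(tokens_to_words(1000))  # 750.0
print(words_to_tokens(750))   # 1000.0
```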

The more powerful AI agents can rely on “reasoning” models, such as OpenAI’s o1 and o3 and DeepSeek’s R1, which break queries and tasks into more manageable pieces. Each step in these chains of thought creates more tokens, which must be processed by AI servers and chips.

“Agent products mostly run on reasoning models and generate about 25 times more tokens per query than chatbot products,” the Barclays analysts wrote.

“Super agents”

OpenAI offers a ChatGPT Pro service that costs $200 a month and runs on its latest reasoning models. Barclays analysts estimated that if this service used the startup’s o1 model, it would generate around 9.4 million tokens per year per subscriber.

There have recently been media reports that OpenAI could offer even more powerful AI agent services that cost $2,000 a month, or even $20,000 a month.

Barclays analysts call these “super agents” and estimate that such services could generate 36 million to 356 million tokens per year, per user.
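To put those annual figures in more familiar terms, here is a minimal sketch that converts them into daily token and word counts. It assumes usage is spread evenly over 365 days and applies the rough rule that one token is about ¾ of a word; the labels and the helper function are made up for this illustration and are not taken from the Barclays note.

```python
WORDS_PER_TOKEN = 0.75  # rule of thumb: one token is about 3/4 of a word
DAYS_PER_YEAR = 365     # assumption: usage is spread evenly across the year

# Annual tokens per user, as cited in the text.
annual_tokens = {
    "ChatGPT Pro on o1": 9.4e6,
    "super agent, low estimate": 36e6,
    "super agent, high estimate": 356e6,
}


def per_day(annual: float) -> float:
    """Convert an annual total into an average daily figure."""
    return annual / DAYS_PER_YEAR


for label, tokens in annual_tokens.items():
    daily_tokens = per_day(tokens)
    daily_words = daily_tokens * WORDS_PER_TOKEN
    print(f"{label}: ~{daily_tokens:,.0f} tokens/day, ~{daily_words:,.0f} words/day")
```

On these assumptions, the high-end super agent estimate works out to close to a million tokens per user every day.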

More chips, Captain!

That is a staggering number of tokens, and it would consume a mountain of computing power.

The AI industry is expected to have 16 million accelerators, a type of AI chip, online this year. About 20% of this infrastructure may be dedicated to AI inference, essentially the computing power required to run AI applications in real time.
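As a back-of-the-envelope check, the two figures above imply roughly how many accelerators would be available for inference. The variable names are chosen for this sketch, and the 20% share is treated as an exact value even though the text gives it only as an approximation.

```python
TOTAL_ACCELERATORS = 16_000_000  # accelerators expected online this year, per the text
INFERENCE_SHARE_PERCENT = 20     # "about 20%", treated as exact for this sketch

# Integer arithmetic avoids floating-point rounding on the percentage.
inference_accelerators = TOTAL_ACCELERATORS * INFERENCE_SHARE_PERCENT // 100
other_accelerators = TOTAL_ACCELERATORS - inference_accelerators

print(f"Inference: {inference_accelerators:,}")   # Inference: 3,200,000
print(f"Everything else: {other_accelerators:,}")  # Everything else: 12,800,000
```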

If agent products take off and prove very useful for consumers and business users, we will probably need “many more inference chips,” the Barclays analysts warned.

The tech industry may even need to repurpose some chips that were previously used to train AI models and use them for inference as well, the analysts added.

They also predicted that cheaper, smaller and more efficient models, such as those developed by DeepSeek, will have to be used for AI agents, rather than more expensive proprietary models.

businessinsider